Resources and Testbed

The Mobile Distributed Computing Lab maintains a private edge-cloud and IoT testbed for research in mobile distributed computing, edge computing, multi-network systems, IoT, and healthcare digital twins. The testbed provides a hands-on experimental environment where students and researchers can design, deploy, and evaluate distributed applications across heterogeneous computing, sensing, networking, and robotic devices.

The infrastructure supports research on resource management, computation offloading, cloud-edge orchestration, multilayer networks, AI-enabled network management, sensor data collection, and real-time intelligent applications.

Private Edge Cloud and IoT Infrastructure

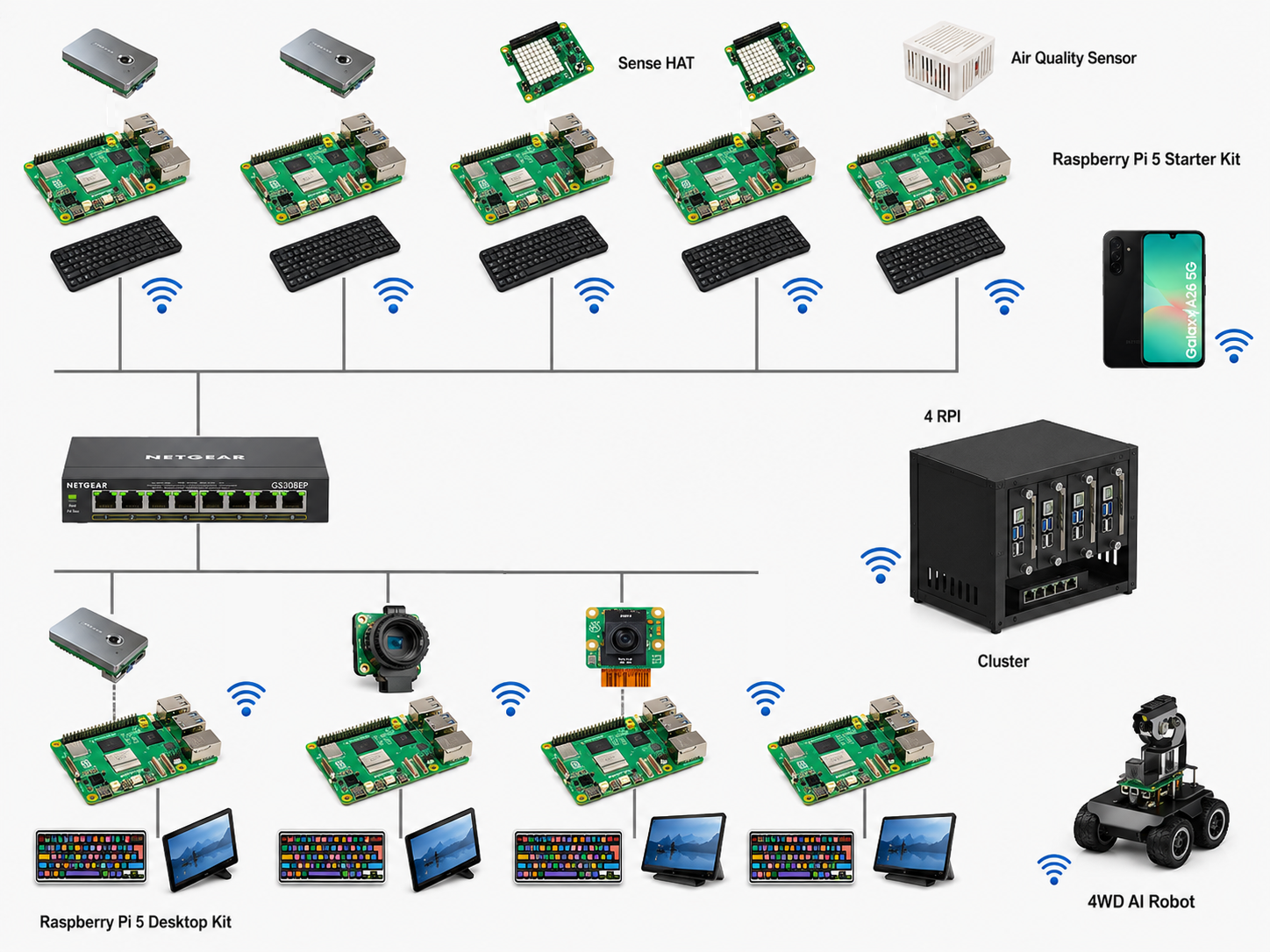

The physical testbed includes Raspberry Pi devices, sensor modules, cameras, mobile devices, a robotic platform, network devices, and a compact Raspberry Pi cluster. These devices are interconnected through wired and wireless networks to support experiments in edge computing, IoT data collection, mobile systems, and cyber-physical applications.

The infrastructure is designed to emulate realistic private edge environments where heterogeneous devices such as edge servers, single-board computers, smartphones, sensors, robots, and network devices cooperate to support distributed applications. Major components include:

- Raspberry Pi 5 devices used as edge nodes and lightweight servers

- Raspberry Pi desktop kits for student development and experimentation

- Raspberry Pi cluster for distributed computing and edge services

- Environmental and air-quality sensors

- Camera modules for video analytics and computer vision experiments

- Mobile devices for mobile-edge and sensing applications

- 4WD AI robot platform for robotics and cyber-physical systems research

- Network switch and local networking equipment for private testbed connectivity

Figure 1. Physical edge-cloud and IoT infrastructure.

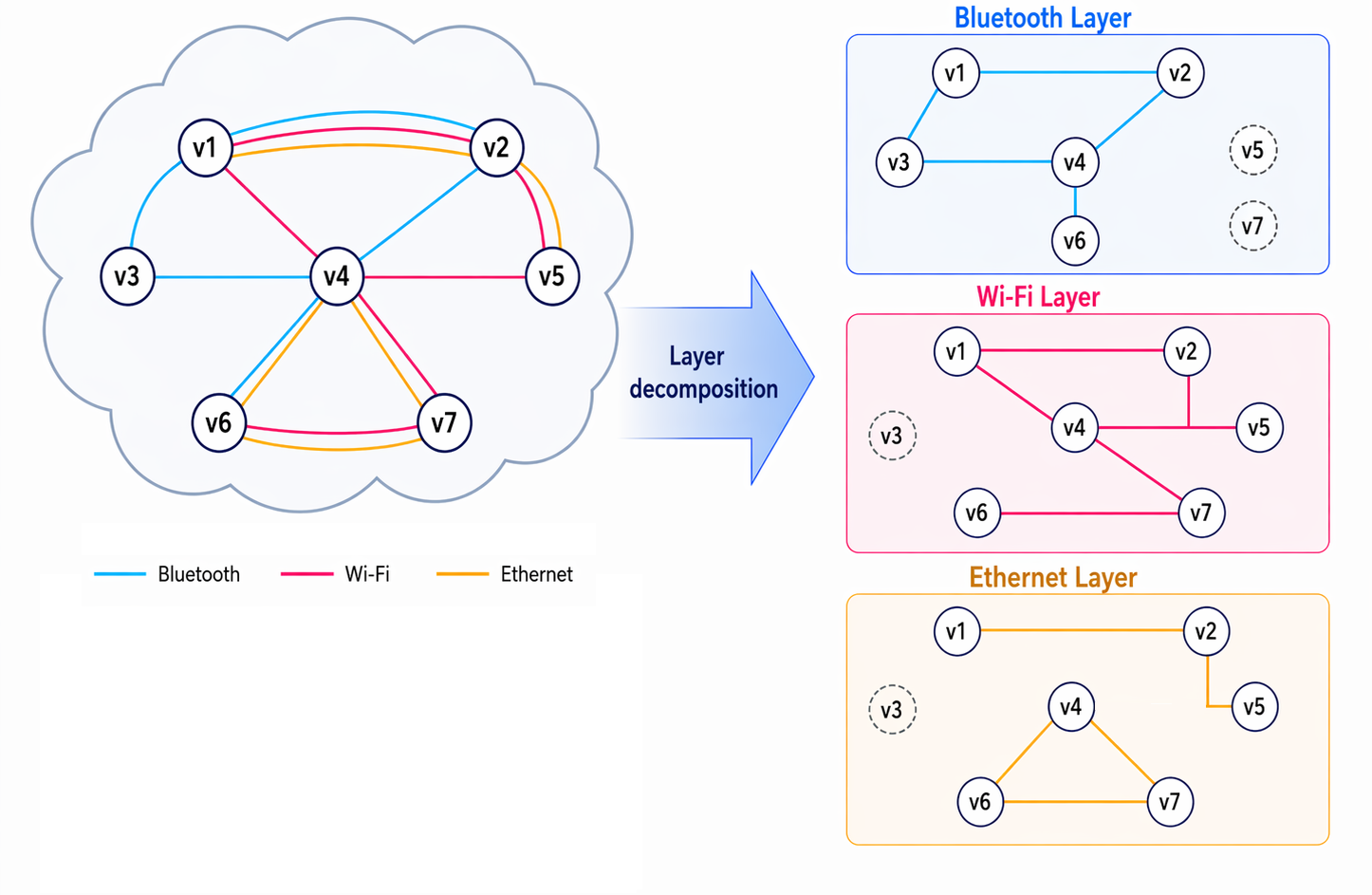

Multi-Network Experimental Environment

A key feature of the testbed is support for multi-network experimentation: devices can communicate over several networking technologies, including Wi-Fi, Bluetooth, and Ethernet. This allows researchers to study how distributed applications behave when multiple communication paths are available and when network conditions change dynamically.

This environment is useful for studying routing, interface selection, network adaptation, mobility management, and AI-assisted network control. In multi-network systems, the same device may have access to more than one communication interface, which creates both opportunities and challenges. Multiple paths can improve reliability and resilience, but they also increase the complexity of coordination, path selection, and resource management. Research questions supported by this environment include:

- How should distributed applications select among Wi-Fi, Bluetooth, and Ethernet links?

- How can routing protocols adapt when multiple network layers overlap?

- How can AI models predict link quality, link lifetime, or path reliability?

- How can edge-cloud services maintain performance under dynamic network conditions?

- How can mobile and IoT devices coordinate communication across heterogeneous networks?

Figure 2. Multi-network environment.
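
The interface-selection question above can be made concrete with a small sketch. The Python example below is only an illustration under stated assumptions, not the lab's method: the `LinkStats` fields, the weights in `link_score`, and the sample numbers are all hypothetical and would need to be replaced with measurements taken on real links. It ranks the available links by a weighted cost and smooths noisy samples with an exponentially weighted moving average (EWMA), a simple stand-in for the learned link-quality predictors mentioned in the research questions.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class LinkStats:
    """Hypothetical per-interface measurements (not real testbed data)."""
    name: str                # e.g. "ethernet", "wifi", "bluetooth"
    latency_ms: float        # smoothed round-trip latency
    loss_rate: float         # fraction of probes lost, 0.0 to 1.0
    throughput_mbps: float   # estimated usable throughput
    up: bool = True          # whether the interface is currently usable


def ewma(previous: float, sample: float, alpha: float = 0.2) -> float:
    """Blend a new sample into a running estimate; alpha is an assumed value."""
    return alpha * sample + (1.0 - alpha) * previous


def link_score(link: LinkStats,
               w_latency: float = 1.0,
               w_loss: float = 200.0,
               w_throughput: float = 0.1) -> float:
    """Weighted cost of a link; lower is better. Weights are illustrative."""
    return (w_latency * link.latency_ms
            + w_loss * link.loss_rate
            - w_throughput * link.throughput_mbps)


def select_link(links: Iterable[LinkStats]) -> Optional[LinkStats]:
    """Return the usable link with the lowest cost, or None if all are down."""
    candidates = [link for link in links if link.up]
    if not candidates:
        return None
    return min(candidates, key=link_score)


if __name__ == "__main__":
    # Made-up numbers chosen only to exercise the functions.
    links = [
        LinkStats("ethernet", latency_ms=1.0, loss_rate=0.0, throughput_mbps=900.0),
        LinkStats("wifi", latency_ms=5.0, loss_rate=0.02, throughput_mbps=200.0),
        LinkStats("bluetooth", latency_ms=30.0, loss_rate=0.05, throughput_mbps=2.0),
    ]
    best = select_link(links)
    print("selected:", best.name if best else "none")
```

With these sample values the wired link has the lowest cost; marking it as down (`up=False`) makes the selection fall back to Wi-Fi. A real experiment would feed `ewma` with periodic probe results and tune or learn the weights per application.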

Private Edge Cloud Platform

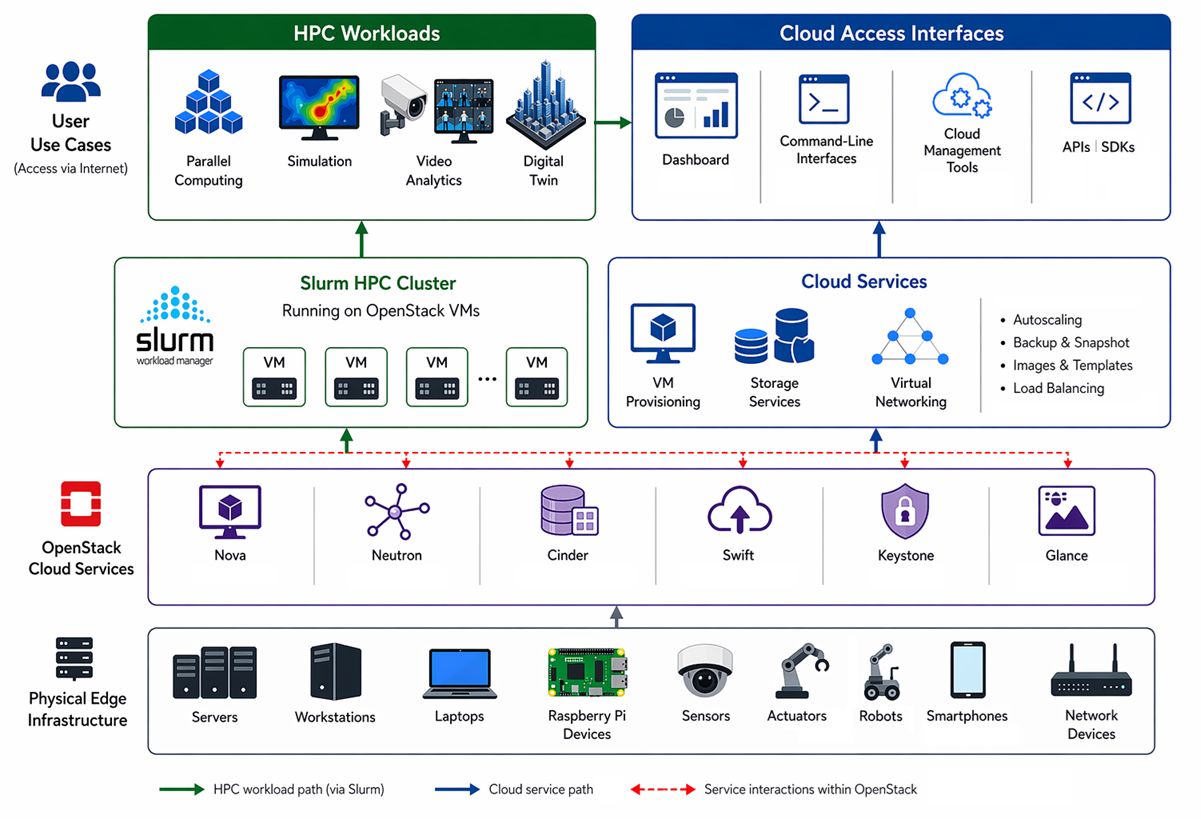

The lab is developing a private edge-cloud platform that combines OpenStack cloud services with Slurm-based high-performance computing capabilities. This platform allows researchers to deploy virtual machines, provision storage, configure virtual networks, and run distributed workloads on edge resources.

The platform is intended to support both cloud-style services and HPC-style workloads. OpenStack provides infrastructure services such as virtual machine provisioning, image management, networking, storage, and authentication, while Slurm manages parallel and distributed workloads running on virtualized or physical edge resources.

This architecture enables students and researchers to experiment with cloud-edge orchestration, workload scheduling, resource abstraction, virtualization, and distributed application deployment.

Figure 3. Private edge-cloud platform architecture.
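
As a rough illustration of the HPC-style side of the platform described above, the following Python sketch renders a Slurm batch script as text. It assumes nothing about how the lab's cluster is configured: the job name, node count, time limit, and command are hypothetical placeholders, and the helper `render_sbatch_script` is introduced here only for illustration. The `#SBATCH` options used (`--job-name`, `--nodes`, `--ntasks-per-node`, `--time`, `--output`) are standard `sbatch` options, and `%x` and `%j` in the output file name expand to the job name and job ID.

```python
def render_sbatch_script(job_name: str,
                         nodes: int,
                         ntasks_per_node: int,
                         time_limit: str,
                         command: str) -> str:
    """Build the text of a Slurm batch script (hypothetical helper)."""
    if nodes < 1 or ntasks_per_node < 1:
        raise ValueError("nodes and ntasks_per_node must be at least 1")
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --ntasks-per-node={ntasks_per_node}",
        f"#SBATCH --time={time_limit}",
        "#SBATCH --output=%x-%j.out",   # job name and job ID in the log name
        "",
        f"srun {command}",              # launch the command on every task
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder values: four nodes, one task each, printing each hostname.
    print(render_sbatch_script("pi-hello", 4, 1, "00:05:00", "hostname"))
```

Saving the output to a file and submitting it with `sbatch` would be the usual next step; whether the same script runs unchanged on virtual machines and on the Raspberry Pi cluster depends on how each is registered with Slurm.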

Example Student Projects

Students can use the testbed for course projects, independent studies, senior projects, master’s theses, and Ph.D. research. Possible projects include:

- Deploying and evaluating OpenStack-based private cloud services on lab edge infrastructure

- Running Slurm workloads on virtual machines or Raspberry Pi clusters

- Designing routing protocols for multi-network environments

- Evaluating Wi-Fi, Bluetooth, and Ethernet performance in edge systems

- Building IoT dashboards for sensor monitoring

- Developing camera-based video analytics applications

- Integrating robots with edge-cloud services

- Building prototype digital twin applications

- Applying machine learning for network and resource management
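
As one possible starting point for the sensor-monitoring dashboard idea in the list above, the Python sketch below keeps a sliding window of recent readings and flags when the window average crosses a threshold. The class name `RollingSensorWindow`, the window size, and the threshold are hypothetical choices made for illustration; a real project would read values from the lab's sensors and pick thresholds appropriate to the quantity being measured.

```python
from collections import deque
from statistics import mean
from typing import Optional


class RollingSensorWindow:
    """Sliding window over recent sensor readings (illustrative sketch)."""

    def __init__(self, size: int, threshold: float) -> None:
        if size < 1:
            raise ValueError("size must be at least 1")
        # deque with maxlen drops the oldest reading automatically.
        self.readings = deque(maxlen=size)
        self.threshold = threshold

    def add(self, value: float) -> None:
        """Record a new reading."""
        self.readings.append(value)

    def average(self) -> Optional[float]:
        """Mean of the readings in the window, or None if it is empty."""
        return mean(self.readings) if self.readings else None

    def alert(self) -> bool:
        """True when the window average exceeds the threshold."""
        avg = self.average()
        return avg is not None and avg > self.threshold


if __name__ == "__main__":
    # Made-up readings; the last one pushes the average over the threshold.
    window = RollingSensorWindow(size=3, threshold=50.0)
    for reading in [10.0, 20.0, 30.0, 120.0]:
        window.add(reading)
        print(reading, window.average(), window.alert())
```

Averaging over a window rather than alerting on single readings makes the dashboard less sensitive to one-off sensor glitches, at the cost of reacting a few samples later.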